CNN Scoop: Trump ate ice cream as news of airstrike on Iranian General broke

Jason Howerton to CNN: “I know you think you’re dunking on @realDonaldTrump, but this makes him look like a f***ing boss.”

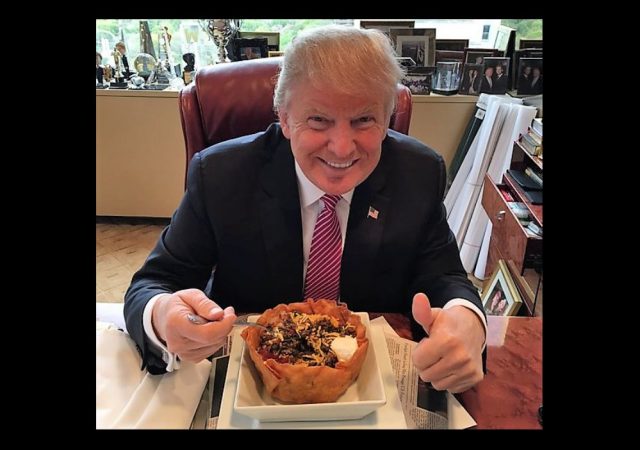

CNN received a lot of mockery when it reported in May 2017 that Trump received two scoops of ice cream at dinners while others got only one scoop.

CNN is back at it, with a report on how President Trump dined on ice cream as news of the airstrike broke:

As news broke that the US struck and killed Qasem Soleimani, President Trump was dining at his Mar-a-Lago club, surrounded by old friends and others like House Minority Leader Kevin McCarthy.

As meatloaf and ice cream were served, the Pentagon confirmed that the US was behind the strikes, the only statement from the administration throughout the night.

Jason Howerton put CNN’s reporting in perspective:

I know you think you’re dunking on @realDonaldTrump, but this makes him look like a fucking boss.

the dude incinerated a terrorist and then was like “good, now get me some ice cream” That’s some America shit right there.

I know you think you're dunking on @realDonaldTrump, but this makes him look like a fucking boss. https://t.co/BbAAYrd7fd

— Jason Howerton (@jason_howerton) January 3, 2020

Amazing that people are actually making themselves upset that Trump ate ice cream.

It defies belief

— Jason Howerton (@jason_howerton) January 3, 2020


Comments

And here I was wondering if it was chocolate cake.

Damn, he could’ve had cake AND death!

Normally Donald does two scoops–but for Soleimani give him three!?

revenge and ice cream are best served cold?

Welp, I was going to comment on this but Jason Howerton pretty much summed it up.

Ate ice cream? Well, somebody had to.

It’s a messy job, but someone has to…

Soleimani got his just desserts.

So why can’t Trump have ice cream?

Where was the overlord when 4 Americans were killed in Benghazi?? Not in the war room; these were staged photos.

I read Jason Howerton to the tune of “Team America”…..seems fitting….

Here’s to TwoScoopsDonny in 2020!

And to Meatloaf Donnie in 2020!

Was it vanilla ice cream? That would be an endorsement of White Power. If it was chocolate, it would be a racist dog whistle. And if it was any other flavor, then it would be shut your misogyny hole you bigot.

Suleimani surprise Swirled with Schumer’s Bitter Tears.

Not plant-based soy alternative enhanced with Manhattan-based organic beta male estrogen.

I hope they left out the cyclamates! That causes cancer in Canadian lab rats.

EVERYTHING causes cancer in Californian lab rats!

Proposition 65 tells us so. Each time I head back out there to visit family, I look in wonder at the Prop 65 signs, and other warning signs mandated by California.

I avoid cancer by avoiding purchasing much of anything while visiting, beyond hotel rooms, meals, and fuel. That way, I won’t get cancer.

And served with 72 raisins as promised, although personally I think the book said prunes.

Nanny Bloomberg was just on the news, stating that as president, he would ban ice cream at the White House.

If it was vanilla then it might be a sign that he is racist.

Iraqi Road.

Winnah!

Meatloaf and ice cream?! Both are good on their own, but together?! Ugh!

I don’t think he had the meatloaf a la mode.

Well, the way it was reported, he did:

As meatloaf and ice cream were served

I doubt it took the Pentagon so long to announce that Trump had time to get through two courses.

(Which, yes, says much more about the inability of modern journalists to write even remotely well than it does about Donny Two Scoops.)

Meatloaf and ice cream . . . so which one did he put ketchup on? Maybe the media want to hold that bombshell under their hats until closer to the election.

Nixon was alleged to have used it on cottage cheese, and the press made a big deal out of it.

(Probably, the Hollywood personalities call it fromage a la maisonette rustique.)

CNN is back at it

As news broke

The way those two bits displayed on my screen, I first thought it said that he had broken CNN’s back. And I thought “Finally!”

I hope he took *three* scoops!

But we’re missing the really important part: what flavor ice cream was it?

And with a cherry on top we can hope!

‘I’m here to eat ice cream and drone terrorists. And I just ate the last of the ice cream…’ PDJT Jan 2, 2020

Rocky Road, no doubt?

Okay. I’ll bite. What then is an appropriate meal after killing the two most dangerous terrorists on the planet? Was ice cream too humble? Should Trump have ordered a gala feast instead? Or would that have offended the vegans?

I’m sure Ben and Jerry’s will come up with an appropriate flavor.

Droney Spumoni … maybe?

Jihadi Splat

Buttscorch Surprise

You’re supposed to watch the drone feed while eating popcorn, of course.

I guess Trump isn’t a micromanager.

A little Tabasco on the meatloaf sets it up real nice for Neapolitan (rainbow–LGBT) ice cream

Biscotti with a cup of French Roast.

That said, the Iranians are dismayed with our withdrawal of quid pro Bos. Scalpel reciprocity. #NoSocialJustice

You remember the picture of Obama sitting in the Situation Room when Osama bin Laden was killed? In the picture, Obama’s the smallest, least important-looking person in the room and appears to be very out of place. He would’ve looked better if he were eating some ice cream.

gutsy decision?

Well……..

That’s why people like Barack are called “puppets.”

The only question here is two scoops or one?

CNN is a clown show.

Democrats should look on the bright side: Soleimani is now registered to vote in Chicago, New York City, New Orleans, Austin, the state of Arizona, every state in the Northeast, Washington DC, the Rio Grande valley, and the People’s Democratic Republic of California.

No, NOT in California – he couldn’t vote here ’cause he’d be an alien…. never mind….

Every day, everyone at CNN gets two scoops of stupid, and out of sight they get ten scoops of payoffs.

Those rats.

At least one person on Twitter has found a way to turn Democrats against Soleimani:

https://mobile.twitter.com/MattsIdeaShop/status/1213187727375527936?

That’ll do it!

Don’t upset the “Friends” TV fans.

Not only did he eat ice cream, he had two scoops! You can’t fault a guy for wanting two scoops of ice cream after icing a terrorist.

Anyone seen much about this?

World News

January 3, 2020 / 5:16 PM / Updated 3 hours ago

Air strikes targeting Iraqi militia kill six: army source

BAGHDAD (Reuters) – Air strikes targeting Iraq’s Popular Mobilization Forces umbrella grouping of Iran-backed Shi’ite militias near camp Taji north of Baghdad have killed six people and critically wounded three, an Iraqi army source said late on Friday.

Two of the three vehicles making up a militia convoy were found burned, the source said, as well as six burned corpses. The strikes took place at 1:12 am local time, he said.

https://www.reuters.com/article/us-iraq-security-blast-taji/air-strikes-targeting-iraqi-militia-kill-six-army-source-idUSKBN1Z229P?__twitter_impression=true

Gotta say…that’s COOL under fire.

The perps burn while POTUS chills…and heartburn toasts the media.

real men and women recognize and understand what this was: an inevitable reprisal for an attack on our countrymen and other innocents

with one stroke, POTUS has restored consequences in a region so long without them–the reaction of the Iranian people is proof enough–POTUS has re-established in the minds of our terrorist adversaries that, while they may be able to steamroll their subjects in their own little fiefdoms, should they be foolish enough to attack innocent Americans they’ll indeed be risking their lives to do so–believe this action will re-establish respect for Americans/our embassies not only in the ME but throughout the world

But HRC going back to sleep while a US ambassador was tortured to death is perfectly okay? I really do not grok leftists.

Ice cream is always a celebration, no matter what’s going on. Why not celebrate taking out a terrorist??

I would have preferred to have President Trump attending the baptism of his nephew as his enemies are eliminated all over the planet. But failing that, having two scoops of ice cream will have to do.