UK Election: Will Conservatives, Theresa May LOSE Seats in the House of Commons?

One poll says yes, while another says Conservatives will gain enough to form a government.

A new poll has shown that British Prime Minister Theresa May and her fellow Conservatives face a massive loss of seats in the snap election scheduled for June 8. YouGov released a projection that shows the Conservatives at 310 seats, down from the 330 they hold now in the House of Commons. They need 326 seats for an overall majority, so the projection leaves them 16 short. The poll showed Labour could have 257 seats, up from the 229 seats they hold now.

Yet other polls have shown the Conservatives with a comfortable lead, while one that Professor Jacobson saw has the Conservatives blowing everyone else out of the water.

Can we trust polls? First off, in 2015, pollsters received a shock when the Conservatives came out on top in the general election, which caused the British Polling Council to open an inquiry into the polls and the result. Then 2016 brought more doubt about polls, after the Brexit vote and after President Donald Trump demolished failed Democrat presidential candidate Hillary Clinton. On top of that, YouGov used a different methodology than usual to produce these numbers.

Tonight: we reveal YouGov's first seat by seat projection of the campaign – suggests Tories fall 16 seats short of overall majority pic.twitter.com/8ouPRHTZ7m

— Sam Coates Sky (@SamCoatesSky) May 30, 2017

The YouGov poll took the UK by storm, especially since it shows such a narrow lead for the Conservatives. But others felt shocked that a polling company would even predict the seats in the House, especially using a brand new method. Alan Travis, a home affairs editor at The Guardian, called the move brave:

In language echoing Yes Minister’s Sir Humphrey Appleby, leading pollsters have described YouGov’s “shock poll” predicting a hung parliament on 8 June as “brave” and the decision by the Times to splash it on its front page as “even braver”.

It is certainly rare for a polling company to produce a seats prediction. They usually leave that to psephologists and political scientists. But it is even more unusual for a company to suddenly employ a new polling model 10 days before a British general election.

The Guardian explained YouGov’s methods:

The methodology involved is described as “multi-level regression and post-stratification” analysis and is based on a substitute for traditional constituency polling, which it regards as “prohibitively expensive”. [YouGov Chief Executive Stephen] Shakespeare claimed YouGov tested it during last year’s EU referendum campaign and it produced leave leads every time. What a shame YouGov did not feel like sharing it with voters while their own published referendum polls showed a remain lead right up to polling day.

Chief scientist Doug Rivers touted the new model because it provides “estimates for small geographies.” He wrote:

The idea behind MRP is that we use the poll data from the preceding seven days to estimate a model relating interview date, constituency, voter demographics, past voting behaviour, and other respondent profile variables to their current voting intentions. This model is then used to estimate the probability that a voter with specified characteristics will vote Conservative, Labour, or some other party. Using data from the UK Office of National Statistics, the British Election Study, and past election results, YouGov has estimated the number of each type of voter in each constituency. Combining the model probabilities and estimated census counts allows YouGov to produce a fairly accurate estimate of the number of voters in each constituency intending to vote for a party on each day.
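To make the last step of that description concrete, here is a minimal Python sketch of post-stratification on its own: multiply the probability that each type of voter backs a party by the estimated number of such voters in a constituency, then add it all up. The regression step that would produce those probabilities is left out entirely. The voter types, counts and probabilities are invented for illustration, and the function name `poststratify` is hypothetical; none of this is YouGov data or YouGov's code.

```python
# Hypothetical numbers for ONE made-up constituency. Not YouGov data.
# Each "cell" is a type of voter; a real model would use far more cells
# (age, education, past vote, and so on).
cell_counts = {
    "under_40_remain": 20000,
    "under_40_leave": 10000,
    "over_40_remain": 15000,
    "over_40_leave": 25000,
}

# Assumed output of the regression step: the probability that a voter in
# each cell intends to vote for each party. Each row sums to 1.
cell_probs = {
    "under_40_remain": {"Conservative": 0.25, "Labour": 0.55, "Other": 0.20},
    "under_40_leave": {"Conservative": 0.45, "Labour": 0.40, "Other": 0.15},
    "over_40_remain": {"Conservative": 0.40, "Labour": 0.40, "Other": 0.20},
    "over_40_leave": {"Conservative": 0.60, "Labour": 0.25, "Other": 0.15},
}


def poststratify(counts, probs):
    """Sum (voters in cell) x (probability of voting for party) over all cells."""
    totals = {}
    for cell, count in counts.items():
        for party, p in probs[cell].items():
            totals[party] = totals.get(party, 0.0) + count * p
    return totals


estimated_votes = poststratify(cell_counts, cell_probs)
projected_winner = max(estimated_votes, key=estimated_votes.get)

for party, votes in sorted(estimated_votes.items()):
    print(f"{party}: about {votes:,.0f} votes")
print("Projected winner in this made-up seat:", projected_winner)
```

Repeating this for every constituency and counting the winners is what would turn vote estimates into a seat projection, which is why the quality of the per-cell probabilities and counts matters so much.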

The Guardian also noted that YouGov’s poll had “an electrifying and ultimately erroneous lead” for the yes campaign in the Scottish independence referendum. The group also had the Conservatives and Labour nearly tied in the 2015 general election, but in the end the Conservatives won big.

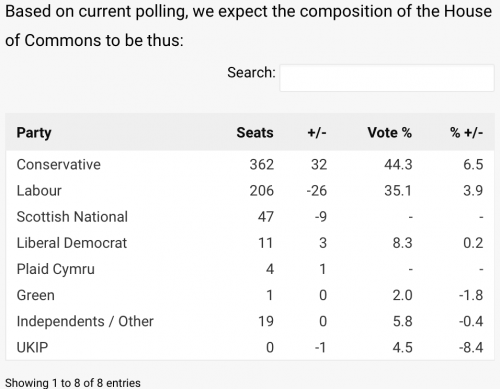

BritainElects.com has a completely different outcome:

That’s quite a bit of a difference, isn’t it?

This poll has the Conservatives doubling their seats in the House of Commons, thus giving them enough of a majority to form a government. Here’s the methodology this organization uses:

The methodology combines data from our poll of polls polling model, representative surveys of the regions, constituent nations (Scotland and Wales), constituencies, parliamentary by-elections and historical results. The first layer of our model, simply, operates on a uniform national swing according to our poll of polls. The second layer involves modifications to UNS so as to account for regional variations. The third and final layer involves the randomisation of expected share changes – within the 4pt margin of error – to project whether X, Y or Z party wins a certain constituency in a given number of tests.

Example: According to polling, Party A is expected in Region X to improve upon their support by 4.8pts on the 2015 election result. Constituency Y, which is within that region, should therefore see support for that party improve by between 0.8pts and 8.8pts. The model performs a repeated number of tests in that constituency, randomising an improvement in support between those two shares. These tests determine the probability as to how likely the seat will change hands.

This model does not account for the potential concentration of resources or issues within certain constituencies. A Lib Dem focus on Vauxhall, for example, or a Plaid Cymru one on the Rhondda, will not show up in our forecast.
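The worked example in the methodology above can be sketched in a few lines of Python. This is only a rough illustration under stated assumptions, not Britain Elects' actual code: the source does not say how the change is randomised, so the sketch draws it uniformly within the 4pt margin; it treats the seat as a two-party contest and holds the incumbent's share fixed; and the 2015 shares of 40 and 45 are hypothetical. The function name `flip_probability` is invented for this example.

```python
import random


def flip_probability(challenger_2015, incumbent_2015, regional_change,
                     margin=4.0, trials=10000, seed=0):
    """Estimate how often the challenger overtakes the incumbent.

    Each trial draws the challenger's change in share uniformly between
    regional_change - margin and regional_change + margin (an assumption;
    the quoted methodology does not name a distribution).
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    flips = 0
    for _ in range(trials):
        change = rng.uniform(regional_change - margin, regional_change + margin)
        if challenger_2015 + change > incumbent_2015:
            flips += 1
    return flips / trials


# Party A is expected to gain 4.8pts in Region X, so in Constituency Y the
# gain is randomised between 0.8pts and 8.8pts. The 2015 shares are made up.
p = flip_probability(challenger_2015=40.0, incumbent_2015=45.0,
                     regional_change=4.8)
print(f"Estimated chance the seat changes hands: {p:.1%}")
```

With these made-up shares, Party A needs a gain of more than 5pts to win, which covers 3.8 of the 8 points in the randomised range, so the estimate should land near 47.5%. A fuller version would randomise every party's share and repeat the exercise across all constituencies.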


Comments

Looks like a nonsense push-poll to me.

This is a redo of the Brexit vote. May exits and Corbyn returns to the EU. The vote will stay the same I think.

Given that:

1) The Conservatives called this election.

and

2) The Labour Party leaders screamed bloody murder when they did.

I’m going to predict that the Conservatives will pick up seats.

No polling data was consulted in reaching this conclusion.

No, it doesn’t. As anyone can see just by looking up, the poll has them increasing their numbers by just under 10%, not 100%. In fact it would be impossible for that to happen, since they already have a majority, so doubling would require them to win more seats than exist!

Is it likely that the slaughter of those attending the concert in Manchester by Muslims is likely to affect the election?